We all know the stereotype of lawyers and high-level executives working six- or seven-day weeks, often for ten or more hours per day. But we also know that prestigious positions come with high salaries and lots of benefits. On the other hand, consider those who work in lower-level positions. These employees are often paid hourly and are not encouraged to work more than 40 hours per week. The result is that the rich end up with lots of money but no time to spend it, while the poor have lots of time but no money to spend. We will now examine whether this is merely a stereotype or whether it is actually true.

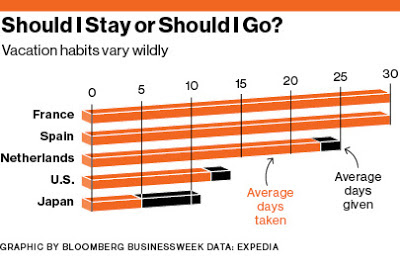

First, let us consider how sharply the United States differs from other Western nations. In Europe, most employees get more than four weeks of vacation per year; in the United States, the average employee gets 12 days off per year. Interestingly enough, Americans, like the Japanese, do not use all of their vacation days. Note the photo below that shows the difference between America and Europe. Fifty years ago, this tendency for Americans to work more than Europeans did not exist.

Now it is important to look at the differences that exist within America, comparing those at the top of the earning pyramid with those at the bottom.

Between 1985 and 2007, total leisure time for the most educated Americans, who typically earn the most money, declined by 1.2 hours to 33 hours per week. During the same period, leisure for the least educated Americans increased by 2.5 hours to 39 hours per week. Not only is there a significant difference between the highest and lowest earners, but this difference has increased rather than decreased over time.
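To see how much the gap widened, here is a rough Python sketch that works backward from the figures quoted above. The 1985 values are not stated in this post; they are implied by subtracting each change from the 2007 level, and the variable names are purely illustrative.

```python
# Weekly leisure hours in 2007 and the change since 1985, as quoted in the text.
most_educated_2007, most_educated_change = 33.0, -1.2
least_educated_2007, least_educated_change = 39.0, 2.5

# Implied 1985 levels (an inference from the quoted figures, not a sourced number).
most_educated_1985 = most_educated_2007 - most_educated_change
least_educated_1985 = least_educated_2007 - least_educated_change

# Leisure gap between the two groups in each year, in hours per week.
gap_1985 = least_educated_1985 - most_educated_1985
gap_2007 = least_educated_2007 - most_educated_2007

print(f"Gap in 1985: about {gap_1985:.1f} hours per week")
print(f"Gap in 2007: about {gap_2007:.1f} hours per week")
```

Since the quoted levels appear to be rounded, the result should be read as approximate: the gap grows from a little over two hours per week to about six.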

This difference may reflect the tendency for salaried employees to feel pressured into working longer hours as they take on extra responsibilities. Employers try to squeeze as much work as possible out of these high-level employees because they are a high fixed cost: whether a salaried employee works 40 or 80 hours per week, the employer pays the same amount.

The wage gap may also be partially explained by this same phenomenon. The difference between the highest and lowest quintiles of wage earners in America is now greater than ever before. Companies wish to save money by hiring the smallest possible number of high-salary earners. For example, suppose a company needs 80 hours of work per week for a particularly challenging job. It could either hire two executives at $150,000 per year each for 40 hours per week, or it could force one person to complete the work of two people over 80 hours and pay $250,000. The company sees an annual $50,000 cost savings, theoretically without a decrease in quality. Because this is a standard practice, the employee takes the job with the high salary and becomes overworked.
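The arithmetic in this example can be checked with a short Python sketch. The salaries and hours come from the paragraph above; the `hourly_rate` helper and the 52-week working year are assumptions added only for illustration.

```python
# Option A: two executives at $150,000 each, 40 hours per week apiece.
two_hire_cost = 2 * 150_000

# Option B: one executive at $250,000 covering all 80 hours per week.
one_hire_cost = 250_000

# Annual savings to the company from choosing option B.
savings = two_hire_cost - one_hire_cost


def hourly_rate(salary, weekly_hours, weeks_per_year=52):
    """Effective pay per hour, assuming every week of the year is worked."""
    return salary / (weekly_hours * weeks_per_year)


print(f"Annual savings: ${savings:,}")
print(f"40-hour executive: ${hourly_rate(150_000, 40):.2f} per hour")
print(f"80-hour executive: ${hourly_rate(250_000, 80):.2f} per hour")
```

Under these assumptions the company saves $50,000 per year, and the single overworked executive earns a higher salary but less for each hour worked than either of the two 40-hour executives would.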

Meanwhile, lower-level employees' wages have risen only slightly over the past 30 or so years. During this time, gains in technology and education have allowed these employees to become much more productive. Some of the productivity gains show up as pay increases, while others are passed on to employees in the form of fewer working hours. Employers of hourly workers have no desire to have these employees work more than 40 hours per week because they would have to pay overtime.

Will this trend continue over time, or will employers begin to reduce the working hours of the highest earners, thereby reducing the wage gap? Stay tuned for a post about why working more than 40 hours per week is actually less productive.